The Invisible Work: What Separates a Six-Month Software Project From a Six-Year One

This is a post about the work on a software project that does not produce a screenshot. It is the work that decides whether the project is worth anything in year two.

TL;DR: Software projects are sold on the visible work, screens, features, demos. The invisible work, tests, migrations, error handling, audit trails, data integrity, is what decides whether the project still works in eighteen months. Teams that skip it ship faster and lose their customers slower. Teams that invest in it ship slightly slower and still have customers in year five. The difference is not quality-for-its-own-sake. It is the second-order cost of getting called at 11pm because something you built three years ago just corrupted someone’s books.

The Foundation Problem

A builder does not get paid extra for the foundations. The client sees the finished walls, the paint colour, the doors. The foundations sat in the ground three months ago and are now covered by concrete. If you asked the builder to skip them, you might save 15% on the build, but you would not be in the building for very long.

Software has the same problem with a different shape. The visible work is the UI, the features, the dashboards. The invisible work is everything that decides whether the visible work keeps working when things change. And clients, unless they have been burned before, often do not know the invisible work is there to ask about.

We have been doing this long enough that we can tell, usually within an hour of looking at a codebase, whether its previous team was doing the invisible work or not. Not from the code quality directly, but from the secondary signals: do the tests run? Do they test anything useful? Are migrations reversible? Are there audit trails? Are errors logged with enough context that a future engineer could actually debug them? If the answer to more than one of these is no, the cost of maintaining the system for the next year is going to be several multiples of what it should be.

What the Invisible Work Looks Like

Tests

A test is not a checkbox. A test is a sentence that says, “this is what I believe this code does, and here is how we can verify it.” The invisible work is writing tests that assert the things that matter, not that the code executes without error, but that a submitted invoice actually marks the stock as reserved; that a cancelled document actually reverses the ledger entries; that a credit note actually decrements the right account.

The tests that catch real bugs are rarely the ones that test happy paths. They are the ones that test the weird corners: what if the user cancels the invoice while the background job is in flight? What if two users submit the same quote at the same time? What if the migration runs on a database where a previous patch partially failed?

When we rebuilt a GST inclusive pricing feature recently, it shipped with 53 test cases. Most of them were about edge behaviour: amend scenarios, multi-currency, manual GST override, orphan cleanup, lifecycle reversal. You could test the feature “works” in four cases. You write the other forty-nine because somebody, somewhere, is going to hit them, and they are going to be an accountant who cares very much about the answer.

Migrations That Survive Old Data

Every non-trivial software project eventually needs to migrate data. A new field is added. An old field changes meaning. A parent-child relationship is restructured. The invisible work is making the migration survive data that has been accumulating for years under slightly different assumptions.

We recently migrated a warehouse-management customer off a misconfigured GL structure that had been accumulating errors since go-live in mid-2025. Every warehouse was posting to a single catch-all account instead of per-warehouse accounts. The fix, reposting 3,600 historical stock transactions to the correct GL accounts, ran across multiple scheduler cycles, against closed accounting periods that had to be temporarily reopened, in a specific order, without breaking any of the monthly journal entries that had been manually adjusting around the problem.

Invisible work, because the end state looks like it should have been like that from the start. No dashboard is different. No feature list changed. But the stock GL now reconciles to the ledger exactly, month on month. And it will continue to, because the repost migration was written to handle orphan entries, batch tracking gaps, and opening-balance double-counting, all of which existed in the real data.

Error Handling That Preserves Context

Error handlers come in two flavours. The useful kind catches a specific error, logs it with enough context that a future human can understand what happened, and either recovers or propagates with that context preserved. The useless kind is a bare except that swallows everything and logs “something went wrong.”

The useless kind is extremely tempting because it makes the immediate problem go away. The code no longer throws. The user does not see a stack trace. Shipping has happened. Six months later, the integration will fail silently and nobody will notice for weeks.

We debugged a food manufacturer’s WooCommerce sync recently where the root cause was a single typo in a doctype reference, hidden inside an exception handler that swallowed the error. Months of orders had been silently re-fetched every hour while four real orders had never made it into the ERP. If the exception handler had logged “failed to advance last_sync_date: doctype ‘WooCommerce Settings’ does not exist,” somebody would have seen it the first week.

Logging is cheap. Silent exception handlers are one of the most expensive things in a codebase.

Audit Trails

Financial software without an audit trail is not financial software. It is a spreadsheet with better validation.

Every financial action we take, every Xero push, every payment reconciliation, every ledger repost, every tax calculation, every period close, writes a row to a log. The log is a separate concept from the error log. It records what happened, in what order, by whom, against which documents, with what outcome. When an accountant calls us three months later with a question about why a payment was allocated the way it was, we can answer from the trail.

Audit trails are invisible work because they are never the feature. They are infrastructure. But they are the difference between answering a support question in five minutes and answering it in five hours of forensic work (or not being able to answer it at all).

Data Integrity

The quietest kind of invisible work is making sure the data stays consistent when things go wrong. Idempotent operations that survive retries. Transactional boundaries that prevent half-complete state. Locking that prevents two users from both succeeding at something that should only succeed once.

We build integrations where the source of truth for “was this push successful” is the remote system, not local state. We build reconciliation routines that repair drift rather than panic about it. We build background jobs that can crash at any point and, on retry, do no harm. This is not glamorous work. It is the kind of work that means the system is still correct on a Monday morning after a Sunday night outage, which your users will never know happened.

Why Teams Skip It

The incentives are bad. Visible work gets budget. Invisible work does not. A feature list has customer-visible items; a test suite is not on the list. A contract specifies “build X”; it does not specify “X survives a worker crash at 3am.”

Teams that skip the invisible work ship faster in the first three months. They look more productive. They are more productive, in the sense of producing more visible output. The bill comes due later, and it comes due on somebody else’s watch.

Clients often do not notice the difference until they are a year in, which is far enough past the initial decision that it feels like bad luck rather than a bad choice. The original team has moved on to the next project. The maintenance team is picking up a codebase where every feature is separately 80% done, but in aggregate the system is 40% functional because the parts that should have tied everything together were never built.

The Honest Version of This

We do the invisible work because we have been on the receiving end of not doing it.

We have been the engineering team called to rescue an integration that had been “working” for a year. We have opened codebases where the test suite contained three tests and all of them asserted True. We have traced financial reconciliation errors back to exception handlers that caught Exception as e: pass. We have seen migrations that shipped to production on the assumption that the data would always be in the shape the developer imagined, rather than the shape it actually was.

Every one of these was fixable. None of them was cheap. And all of them were the result of decisions three years earlier to ship something that looked finished without the work that would keep it finished.

What This Looks Like for a Client

If you are engaging a software team, these are the questions we would ask, not for signalling, but because the answers tell you whether the system you are paying for will still be worth anything in eighteen months:

- What tests get written for this feature? You want an answer that includes edge cases, not just happy paths.

- What happens when this integration fails? You want an answer that describes logging, alerting, and recovery, not just “it’ll retry.”

- What’s the audit trail for this? If it is a financial feature and there is no audit trail, that is a problem.

- How does the migration handle data that’s already in weird states? If the answer is “what weird states?”, the team has not thought about it yet.

- If I asked you to ship this a month earlier, what would you cut? The honest answer names specific invisible work. The dishonest answer says “nothing, we’d just work harder.”

The Bottom Line

A software project that costs 30% more to build and lasts five times as long is not a more expensive project. It is a vastly cheaper one. The industry is full of platforms and systems that were “done” in six months and had to be thrown away in year two. The ones still running in year five are the ones where somebody, quietly and unromantically, did the invisible work.

This is not perfectionism. It is not over-engineering. It is professional discipline, the same way a builder uses proper foundations because they know how buildings fail. We think of it the same way. It is the reason the software we build is still running years later, doing the thing it was built for, with the same reliability it had on day one.

About HighFlyer

HighFlyer is an Auckland-based technology company run by two technical founders. We build custom software, AI systems, and integrations for New Zealand and Australian businesses. The software we build is the kind you do not have to think about after it ships, because the invisible work is already done.

Learn about our approach or get in touch if you want to talk through what a properly-built system for your business would look like.

Tags

About the Author

Imesha Sudasingha

Head of Engineering

Imesha is the Head of Engineering at HighFlyer and a member of the Apache Software Foundation with 10+ years of experience across integration, cloud, and AI. He cares a lot about the invisible work that keeps production systems standing.

Recent Posts

Categories

You May Also Like

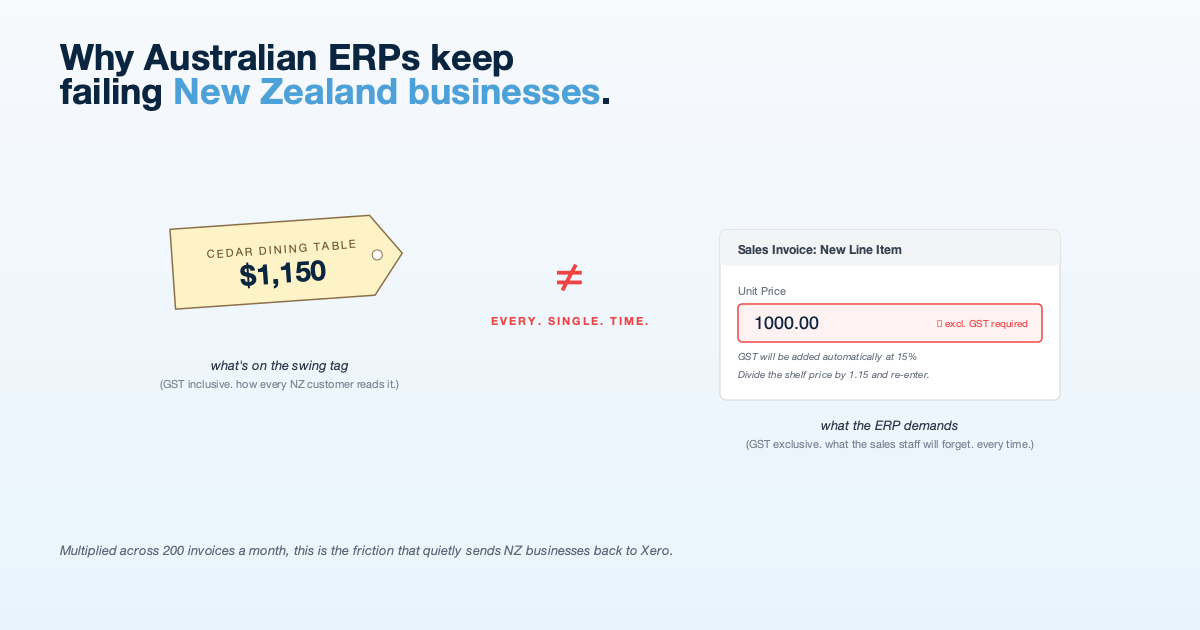

Why Australian ERPs Keep Failing New Zealand Businesses

NZ businesses think and invoice in GST-inclusive terms. Most cloud ERPs do not. The mismatch creates friction that shows up...

Read More

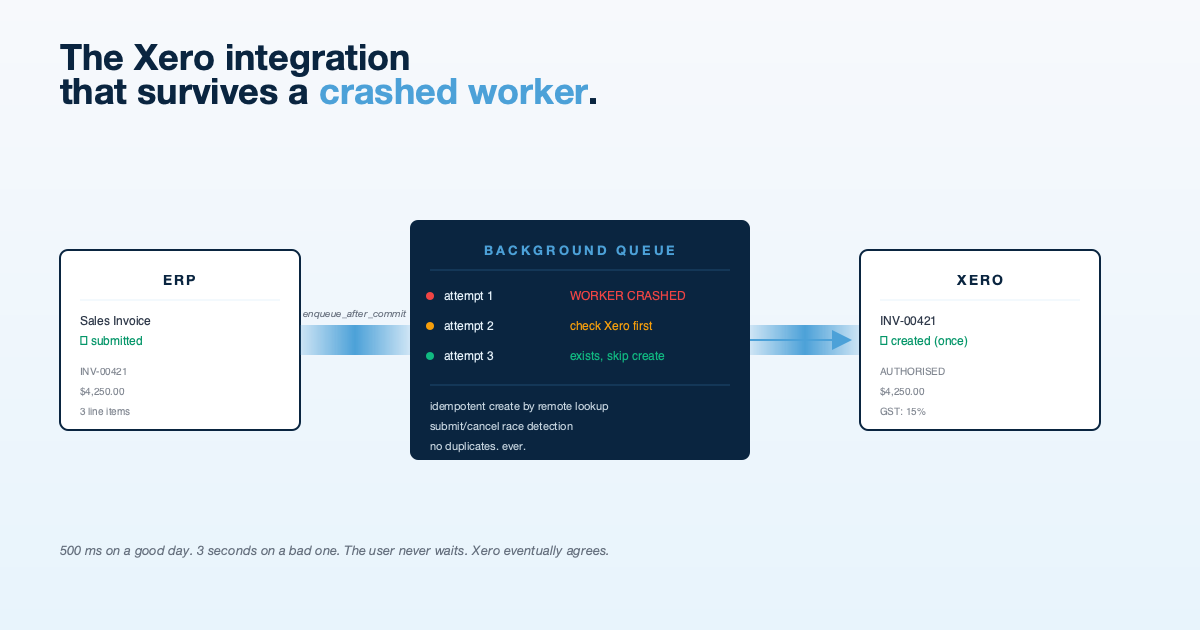

The Xero Integration That Survives a Crashed Worker

Tutorials show you how to call the Xero API. Production shows you what happens when the worker dies halfway through....

Read More

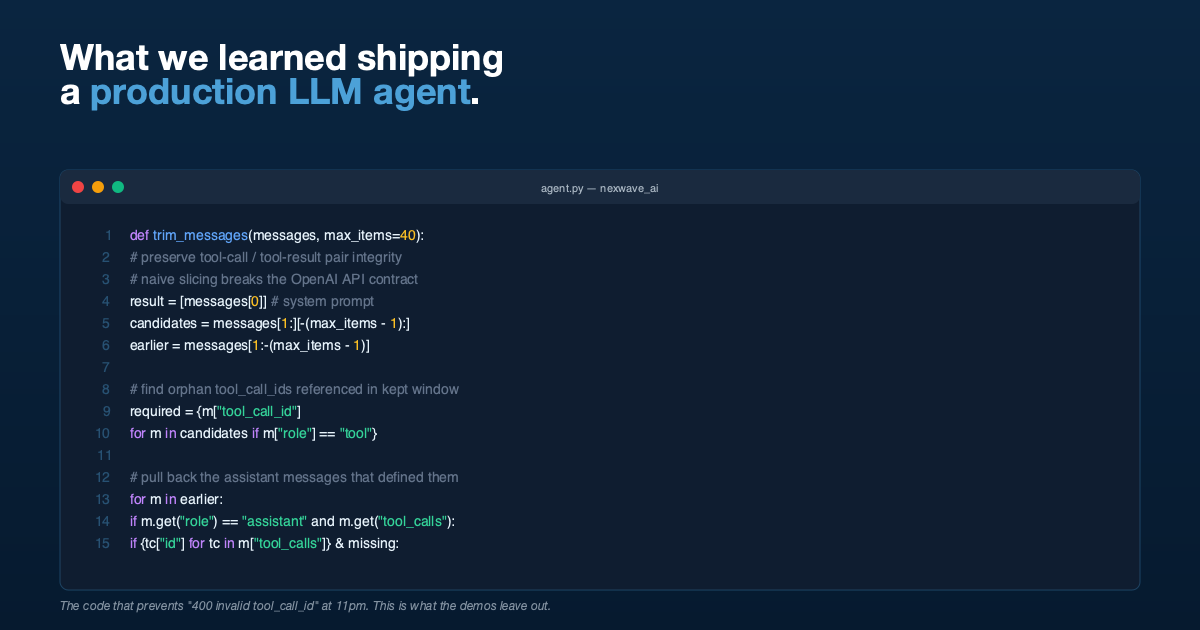

What We Learned Building a Production LLM Agent That Writes Its Own ERP Queries

Tool-call integrity during history trimming, delegated arithmetic, permission pass-through, and why demos lie. Lessons from shipping an agent into production.

Read More