How We Actually Use AI in Production Engineering

Most content about “AI for engineering” is either hype (“AI will replace developers”) or demo-floor theatre (watch me generate a TODO app with a prompt). Neither is useful if you are running production systems. The real question, for a consultancy that ships integrations and ERP customisations, is: what does AI actually change about how we do our jobs? This is the honest answer, with three specific cases from the last few months.

TL;DR: AI is not writing production code for us. It is shortening the investigative half of engineering, the part that used to mean days of spreadsheet exports, pivot tables, and squinting at logs. A recent example: a customer had stock GL accounts that had disagreed with the stock ledger for eight months. 3,600 historical transactions. Four overlapping root causes. A hidden double-counting bug in the opening balance import. One engineer, one working session with AI alongside, and every account matched to the cent. The engineer still owned every decision. AI did the kind of work that would otherwise have taken a week of manual effort.

What This Is Not

Before the specifics, the guardrails.

- AI is not queried against production. Our standard pattern is to take an up-to-date backup of the customer database and restore it to a local environment. AI works against that restored copy. Typically the backup is from within the last 24 hours, which is current enough for any forensic or historical investigation. Humans apply the resulting fixes to production after the session concludes.

- AI does not write code that ships unreviewed. Every commit goes through the same review pipeline as human-authored code. Tests, type checks, and the usual CI hurdles apply. AI does not get a fast lane.

- AI does not replace business decisions. When the investigation reveals a choice (cancel and rebook, or amend? Include credit-balance customers in statements, or not?), the engineer talks to the customer. AI can lay out the trade-offs. It does not pick one.

With those out of the way, here are the three cases.

Case One: Eight Months of Accounting Drift, One Session

A customer’s stock general-ledger accounts and their stock ledger had not matched since go-live, eight months prior. Every month the accountants had been quietly posting manual journal entries to paper over the gap. That workaround had compounded the problem rather than fixing it. By the time we looked at it, the situation was:

- A four-digit discrepancy between the Ingredients, Packaging, and Finished Goods GL accounts and what the stock ledger said they should be.

- Dozens of manual journal entries, some correcting, some making things worse.

- A persistent “phantom” balance against a dummy customer that no one could explain.

- Three cancelled stock entries whose stock ledger had been reversed but whose GL entries had never been.

- A failed item valuation repost that had been stuck for months.

Weeks of someone’s time could have disappeared into that investigation. The reason they did not is the workflow.

Step 1: Take a Backup, Restore Locally

We never let AI touch production. The first step of any investigation like this is to take a recent customer backup (in this case, taken the evening before) and restore it to a local sandbox. That sandbox has the full customer data, the full customer schema, and none of the customer’s running integrations. We can ask anything, run anything, try anything, and the customer never sees.

The backup being from the previous day matters. For forensic work we need every transaction the customer has made up to the point of investigation. A week-old backup would have missed the two JEs the accountant posted yesterday, which might have been exactly the ones creating the phantom balance.

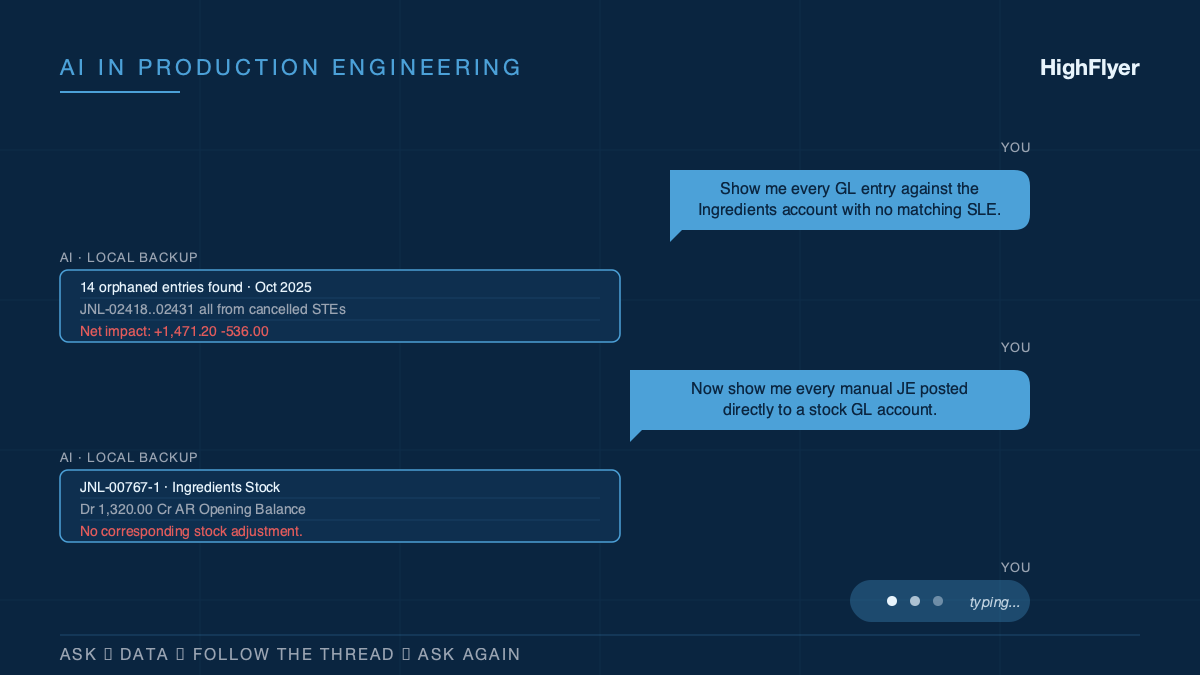

Step 2: Ask, Data, Follow-Thread, Ask

With the sandbox ready, the session pattern is:

- Ask a question.

- AI queries the sandbox (via a safe, read-only database connection) and returns the data.

- The engineer reads the result, forms the next hypothesis, and asks the next question.
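The "safe, read-only database connection" in that loop can be illustrated with a minimal sketch. It uses SQLite as a stand-in for the restored backup, which is an assumption made only so the example is self-contained; the table name and the helper names (`open_read_only`, `build_demo_sandbox`, `write_is_rejected`) are hypothetical. The point is only that the connection itself, not the discipline of whoever is asking, is what refuses writes.

```python
import sqlite3
from pathlib import Path


def build_demo_sandbox(path: str) -> None:
    """Create a tiny stand-in for a restored backup (hypothetical schema)."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE gl_entry (voucher_no TEXT, account TEXT, debit_cents INTEGER)")
    conn.execute("INSERT INTO gl_entry VALUES ('STE-0001', 'Ingredients', 10000)")
    conn.commit()
    conn.close()


def open_read_only(path: str) -> sqlite3.Connection:
    """Open the file through SQLite's URI syntax with mode=ro (read-only)."""
    uri = Path(path).resolve().as_uri() + "?mode=ro"
    return sqlite3.connect(uri, uri=True)


def write_is_rejected(conn: sqlite3.Connection) -> bool:
    """Return True if the connection refuses a destructive statement."""
    try:
        conn.execute("DELETE FROM gl_entry")
    except sqlite3.OperationalError:
        conn.rollback()  # leave the connection in a clean state
        return True
    return False
```

With a connection opened this way, `SELECT` statements behave normally while any `INSERT`, `UPDATE`, or `DELETE` raises an error. On a server database the equivalent would usually be a database user with read-only grants.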

The first question was: “Show me every GL entry against the Ingredients account that has no corresponding Stock Ledger Entry, ordered by date.” AI wrote the query, ran it, and surfaced 14 orphaned GL entries from cancelled stock entries in October. That lead was too clean to be the whole story.

Next: “Of those orphaned GL entries, what is the total debit/credit impact on each stock account?” $1,471 overstating Ingredients, $536 understating Finished Goods. Numbers that matched one of the gaps exactly.
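The first two questions are, in shape, an anti-join followed by an aggregate. The sketch below shows that shape against an in-memory SQLite database. Everything in it is an assumption for illustration: the simplified table and column names, matching on `voucher_no` alone, storing amounts as integer cents, and the demo rows (which are invented and are not the customer's figures). The queries actually run in the session are not reproduced here.

```python
import sqlite3

# GL entries on one account that have no matching stock ledger entry.
ORPHANS_FOR_ACCOUNT = """
SELECT gl.voucher_no, gl.posting_date, gl.debit_cents, gl.credit_cents
FROM gl_entry AS gl
WHERE gl.account = ?
  AND NOT EXISTS (
      SELECT 1 FROM stock_ledger_entry AS sle
      WHERE sle.voucher_no = gl.voucher_no
  )
ORDER BY gl.posting_date
"""

# Net debit-minus-credit impact of all orphaned entries, per account.
ORPHAN_IMPACT_BY_ACCOUNT = """
SELECT gl.account, SUM(gl.debit_cents - gl.credit_cents) AS net_cents
FROM gl_entry AS gl
WHERE NOT EXISTS (
    SELECT 1 FROM stock_ledger_entry AS sle
    WHERE sle.voucher_no = gl.voucher_no
)
GROUP BY gl.account
ORDER BY gl.account
"""


def build_demo_db() -> sqlite3.Connection:
    """Load a few invented rows into a hypothetical, simplified schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE gl_entry (
            voucher_no TEXT, account TEXT, posting_date TEXT,
            debit_cents INTEGER, credit_cents INTEGER
        );
        CREATE TABLE stock_ledger_entry (voucher_no TEXT, item_code TEXT);
        """
    )
    conn.executemany(
        "INSERT INTO gl_entry VALUES (?, ?, ?, ?, ?)",
        [
            ("STE-0001", "Ingredients", "2024-10-01", 10000, 0),    # has an SLE
            ("STE-0002", "Ingredients", "2024-10-05", 5000, 0),     # orphan
            ("STE-0003", "Ingredients", "2024-10-03", 0, 2000),     # orphan
            ("STE-0003", "Finished Goods", "2024-10-03", 2000, 0),  # orphan
        ],
    )
    conn.execute("INSERT INTO stock_ledger_entry VALUES ('STE-0001', 'FLOUR')")
    conn.commit()
    return conn
```

A positive `net_cents` means the orphaned entries overstate the account and a negative value means they understate it, which is the form in which the per-account gaps were compared.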

Then: “Now show me every manual JE posted to a stock GL account.” That surfaced JNL-00767-1, a JE posting $1,320 directly to Ingredients with no stock adjustment. Orphan. That explained a chunk of what was left.

The pattern kept going. Three packaging items had Bin vs SLE drift of $1,128.63 that turned out to be stock-value recalculation issues. A $0.10 rounding drift on one Finished Goods item needed a targeted repost of 14,969 stock ledger entries. And, in the opening balance import from the previous accounting system, a “Reverse Opening Balances” journal entry had been debiting an AR temp account every time the opening invoices were imported, even though the invoices themselves already offset it. That was the phantom: a dummy customer sitting on a $295k AR credit that nobody could find because it was spread across months of imports that looked innocent.

Step 3: Simulate Every Fix Before Touching Anything

For each root cause, the next question was “if I apply this specific correction, what does the stock account look like afterwards?” AI built running-balance simulations against the restored backup. We got to see the post-fix state for all four stock accounts before a single production change.

Only after every simulation showed zero drift did anything get applied to production. The human applied it, manually, with a documented rollback.
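A running-balance simulation of this kind can be sketched in a few lines. The version below is an illustration under stated assumptions, not the simulation used in the session: the `Entry` type, the sign convention (debits positive, credits negative), and the function names are all hypothetical. It replays the existing GL entries plus a proposed correction in date order and reports the remaining drift against the stock ledger value.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Entry:
    posting_date: str  # ISO date, so string order is chronological order
    account: str
    amount: Decimal    # assumption: debit positive, credit negative


def running_balance(entries: Iterable[Entry], account: str) -> List[Tuple[str, Decimal]]:
    """Return (date, balance-after-entry) pairs for one account, in date order."""
    balance = Decimal("0")
    history = []
    for entry in sorted(
        (e for e in entries if e.account == account),
        key=lambda e: e.posting_date,
    ):
        balance += entry.amount
        history.append((entry.posting_date, balance))
    return history


def drift_after_fix(
    gl_entries: Iterable[Entry],
    corrections: Iterable[Entry],
    account: str,
    stock_ledger_value: Decimal,
) -> Decimal:
    """Closing GL balance with the corrections applied, minus the stock ledger value."""
    history = running_balance(list(gl_entries) + list(corrections), account)
    closing = history[-1][1] if history else Decimal("0")
    return closing - stock_ledger_value
```

A proposed fix is only a candidate for production when `drift_after_fix` returns exactly `Decimal("0")` for every affected account. Using `Decimal` rather than floats matters here because the target is agreement to the cent.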

Outcome

End of the single session:

- Every stock GL account matched the stock ledger exactly. $0.00 drift across Ingredients, Packaging, Finished Goods, and In Transit.

- The $295k phantom AR credit identified, explained, and resolved.

- The stuck item valuation repost diagnosed and restarted (one warehouse had broken batch tracking from the opening-stock import).

- A documented remaining-action list for the accountant (one Material Transfer from an old warehouse, a small SUSPENSE residual to clear).

What would have taken a week of an engineer’s time plus a week of an accountant’s took a few hours of one engineer’s, mostly because AI took over the kind of work that used to be “export to CSV, build a pivot, find the outliers, export again.”

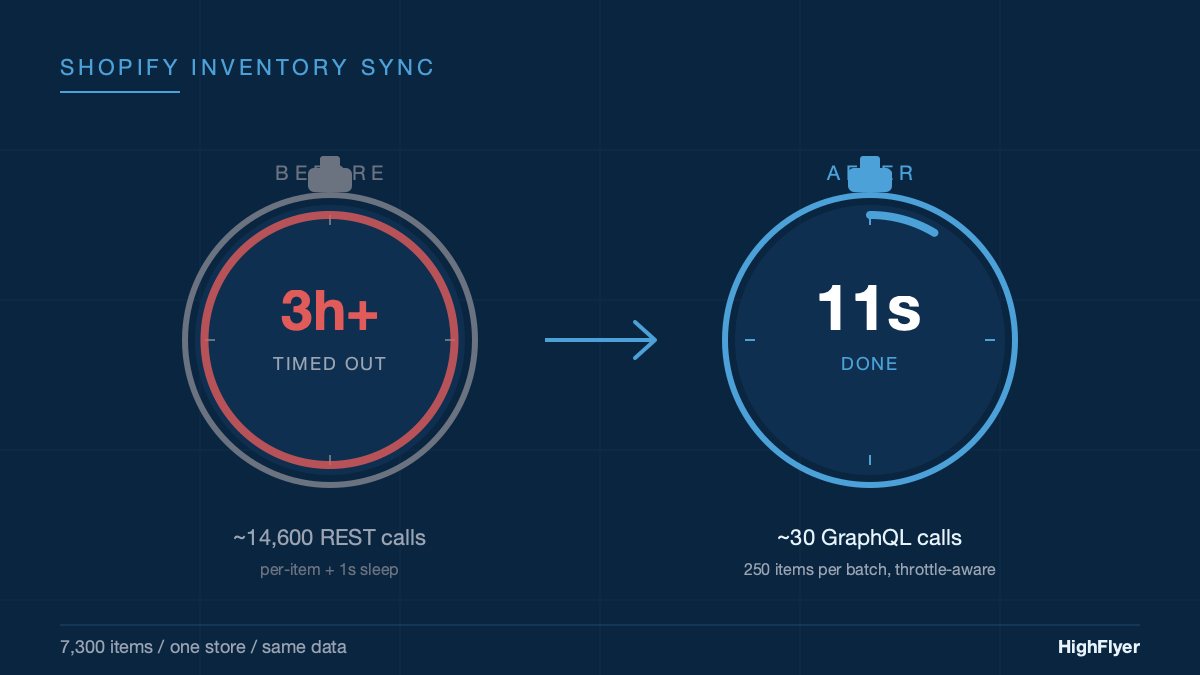

Case Two: The Three-Hour Timeout That Was Never a Timeout

Different customer, different problem. A Shopify inventory sync had been timing out. We had already raised the job timeout twice, from 30 minutes to 60 minutes and then to 3 hours. It was still timing out on the largest store.

The temptation, in that situation, is to raise it again. AI did not fix the problem; it asked a different question.

“How many API calls does this sync make for the largest store?” About 14,600. “At the current rate-limit sleep of one second per call, what is the theoretical minimum run time?” Over four hours. “Can a leaky-bucket REST API fundamentally support sub-hour sync for a store of this size?” No.
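The arithmetic behind that answer is short enough to write down. The sketch below uses the two figures quoted above (roughly 14,600 calls, one second of sleep per call); the function name is hypothetical, and the result is a lower bound because it ignores request latency entirely.

```python
from datetime import timedelta


def min_sync_runtime(api_calls: int, sleep_seconds_per_call: float) -> timedelta:
    """Lower bound on run time when every call is followed by a fixed sleep."""
    return timedelta(seconds=api_calls * sleep_seconds_per_call)


runtime = min_sync_runtime(14_600, 1.0)   # figures quoted in the text
hours = runtime.total_seconds() / 3600    # a little over 4 hours
exceeds_timeout = runtime > timedelta(hours=3)

print(runtime, round(hours, 2), exceeds_timeout)
```

Because the lower bound already exceeds the 3-hour timeout, no timeout value short of "longer than the sync interval" could have worked. The fix had to reduce the number of calls.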

The whole timeout-bumping exercise had been treating the dial as the thing to adjust. The AI-assisted line of questioning reframed the timeout as a diagnostic signal. We rewrote the sync to use Shopify’s batched GraphQL mutation, and the same store now syncs in eleven seconds. The full write-up of that rewrite is in “From 3 Hours to 11 Seconds: Rebuilding an ERPNext Shopify Inventory Sync”.

The value of AI here was not code generation. It was the willingness to ask the boring arithmetic question that a fatigued engineer had stopped asking.

Case Three: Four Missing Orders and One Character

A food manufacturer thought their orders were not syncing from their Shopify-adjacent storefront into their ERP. The integration logs said “running successfully.” The customer was right; the logs were wrong.

The path to the root cause was another ask-data-follow sequence:

- “Show me the most recent successful run of the WooCommerce sync.” Every hour for two weeks. Never an error.

- “Now show me the wc_last_sync_date value over time.” It had not advanced since go-live.

- “What doctype is the sync writing that timestamp to?” A doctype that did not exist in the schema.

- “What does Frappe’s db_set do when you write to a doctype that does not exist?” It silently ignores the write and returns no error.

Single character wrong in a doctype name. Every hour, the sync was re-fetching all 3,500 historical orders because it thought it had never successfully synced. It timed out before reaching the tail of the queue, which was where the four genuinely missing “retry” orders were sitting.
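The failure mode generalises beyond this one integration: a write that can fail silently needs a read-back check. The sketch below is a toy model of the behaviour described above, not Frappe's implementation or API. `ToyStore`, `checked_set`, and the doctype names "Sync Settings" and "Sync Setting" are all hypothetical (the real doctype name is not given in this post).

```python
class ToyStore:
    """Toy key-value store that drops writes to unknown doctypes without raising."""

    def __init__(self, known_doctypes):
        self._known = set(known_doctypes)
        self._values = {}

    def set_value(self, doctype, field, value):
        # Assumption mirroring the text: unknown doctype means a silent no-op.
        if doctype in self._known:
            self._values[(doctype, field)] = value

    def get_value(self, doctype, field):
        return self._values.get((doctype, field))


def checked_set(store, doctype, field, value):
    """Write, then read back, and fail loudly if the value did not persist."""
    store.set_value(doctype, field, value)
    if store.get_value(doctype, field) != value:
        raise RuntimeError(f"write to {doctype!r}.{field} did not persist")


store = ToyStore({"Sync Settings"})

# One character off: the write vanishes and the sync sees "never synced".
store.set_value("Sync Setting", "wc_last_sync_date", "2024-01-01T00:00:00")
last_sync = store.get_value("Sync Settings", "wc_last_sync_date")  # None
```

With `last_sync` stuck at `None`, every run starts from the beginning of history, which matches the hourly full re-fetch described above. Routing the same write through `checked_set` would have raised on the first run instead of reporting success.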

AI did not find the bug. An engineer who had read the db_set source would have found it the same way. AI made the reading faster.

The Pattern

If you look across the three cases, the workflow is not about AI writing code. It is about AI compressing the investigation loop:

- Engineer asks a question.

- AI queries the restored sandbox (or reads source code, or parses logs) and returns structured data.

- Engineer interprets, forms the next hypothesis, asks again.

Each iteration used to cost minutes of context switching. Export, munge, pivot, paste back into the IDE, think, repeat. Now each iteration is a typed sentence. Twenty iterations in a session instead of five. You get to the bottom of problems you would previously have abandoned as unfixable.

What This Changes About the Work We Take On

Before this became part of our workflow, there was a class of engagement we routinely turned down: “our accounts have been wrong for months, we do not know why, can you come and figure it out.” Those projects looked like two weeks of work before you even knew what the work was. The uncertainty made them difficult to scope and unpleasant to price.

Now those investigations are plausible inside a day, sometimes less. The upstream effect is that we can take on:

- Forensic data reconciliation on legacy systems.

- Migrations out of platforms where the source data has drifted from the source of truth.

- Performance investigations on pipelines that have “always been slow.”

- Compliance audits against transactional systems where the documentation is out of date.

Not because AI makes us magical. Because the expensive part of these projects (the investigative phase) is now smaller than the implementation phase, rather than the other way around.

What We Tell Clients

When a prospect asks whether we “use AI”, the answer is yes, with context.

- We do not ship AI-generated code without human review.

- We do not connect AI to production. It works against a restored local copy of the database.

- We do not use AI to generate your business logic. The logic is yours; we build it with you.

- We use AI the way a careful engineer uses a calculator: a tool that makes specific parts of the job faster, and that still requires the engineer to understand what the tool is doing and whether the answer looks right.

If a consultancy tells you AI is their value proposition, be careful. AI is a capability. The value is still whether the team can diagnose your actual problem, architect the right solution, and ship something that works in production at 9am on a Monday. Hire for that. Assume AI is table stakes in every modern engineering shop and evaluate the people.

Where This Goes Next

Two things will get better.

Faster sandboxing. Restoring a customer backup, masking sensitive fields, and pointing a local environment at it is still a 20-minute manual process. It should be a one-click affair that spins up an ephemeral sandbox per investigation. We are working on it.

Longer-horizon investigations. Some problems do not fit in a single session. They span weeks of state change and involve re-running the investigation against fresh data each time. That is a different AI workflow, closer to agentic tool-use than to pair debugging. We have not made it routine yet.

What is clear is that the boundary between “things we can take on” and “things we have to decline” has moved, and it will move again.

If You Need This Built

HighFlyer builds and fixes integrations, ERP customisations, and data-plumbing systems for New Zealand businesses. We use AI the way we would use a senior colleague: in the investigation, in the pattern recognition, in the simulation, but never as the engineer of record.

About the Author

Imesha Sudasingha

Co-founder & CTO

Imesha is the Co-founder & CTO at HighFlyer and a member of the Apache Software Foundation with 10+ years of experience across integration, cloud, and AI. He leads the AI-assisted engineering practice at HighFlyer and writes about production-grade integrations, ERP customisation, and software architecture.

A monthly note for SME operators

On technology, AI, and digitalisation. One real story, two trends, and one quick win each issue.

Recent Posts

Categories

You May Also Like

From 3 Hours to 11 Seconds: Rebuilding an ERPNext Shopify Inventory Sync

We kept bumping the timeout. First to 60 minutes. Then to 3 hours. It still timed out. The timeout was...

Read More

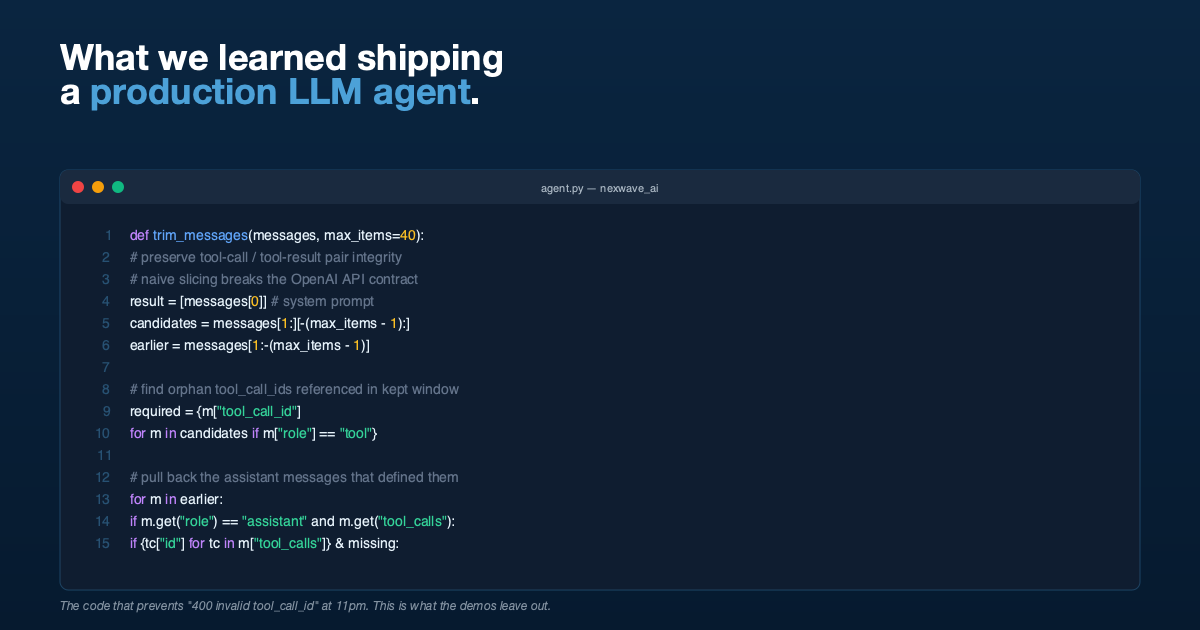

What We Learned Building a Production LLM Agent That Writes Its Own ERP Queries

Tool-call integrity during history trimming, delegated arithmetic, permission pass-through, and why demos lie. Lessons from shipping an agent into production.

Read More

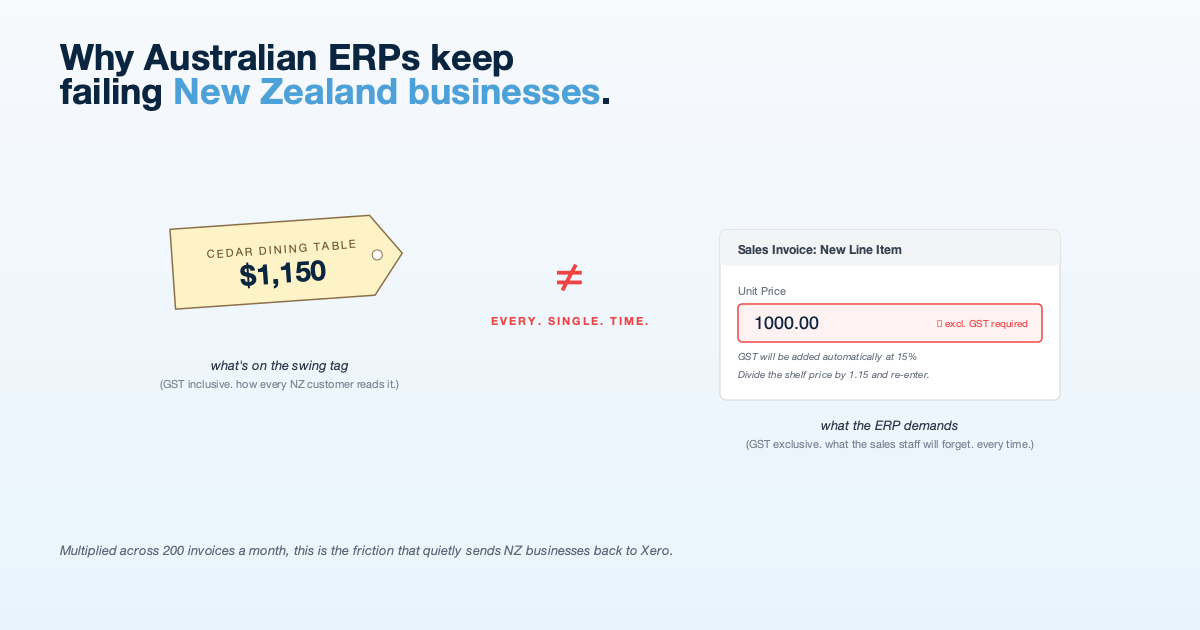

Why Imported ERPs Keep Failing New Zealand Businesses

NZ businesses think and invoice in GST-inclusive terms. Most cloud ERPs do not. The mismatch creates friction that shows up...

Read More